Feeling Productive ≠ Being Productive

There is a moment I have heard described more times than I can count. A psychologist, a coach, a knowledge worker opens their laptop, fires up their AI tool of choice, and feels a quiet surge of confidence as they start their working day. They are working smarter now. They are ahead of the curve. They are one of the people who have "figured this out".

That feeling, it turns out, may be one of the most psychologically interesting lies we are currently telling ourselves.

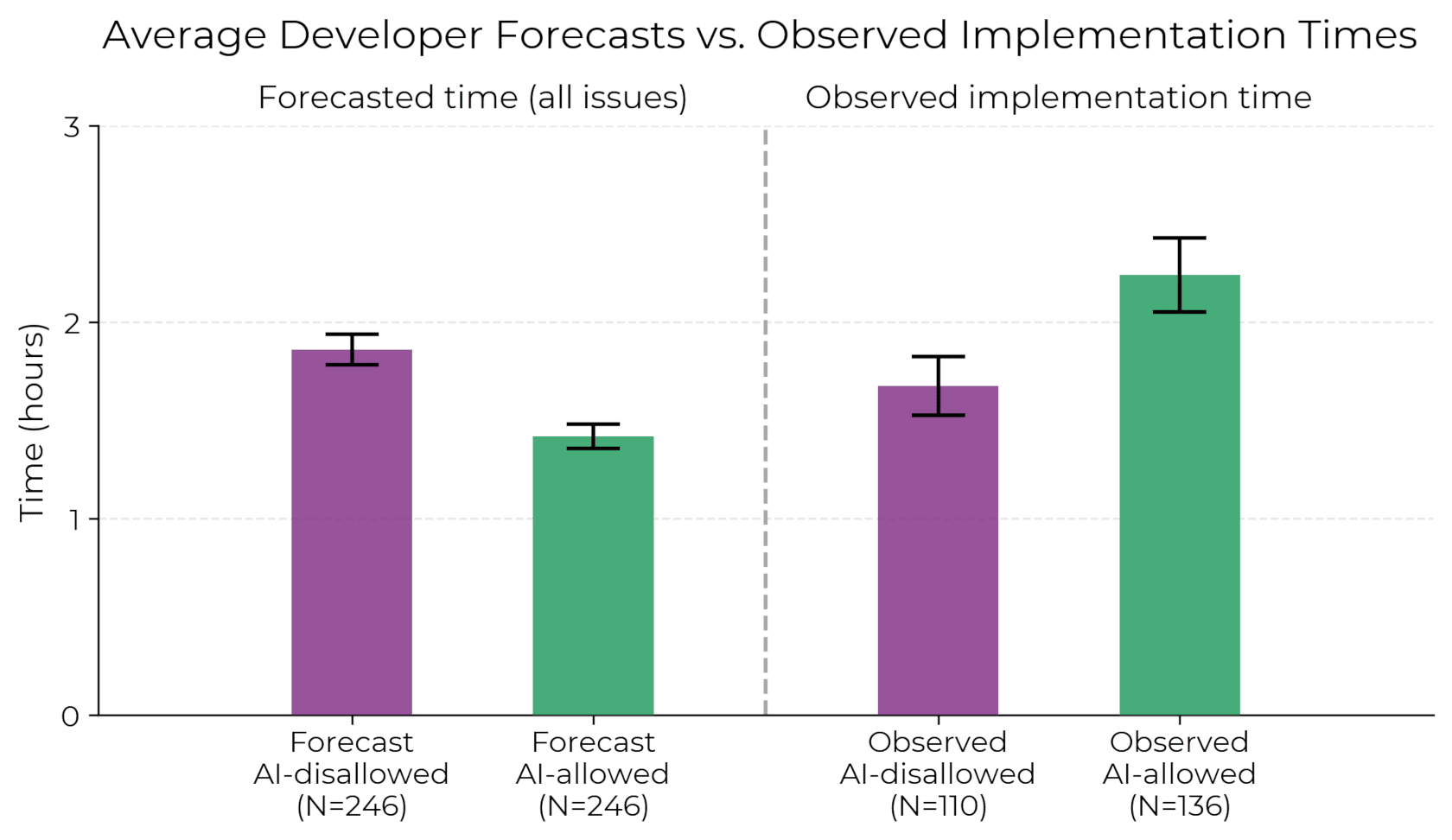

In July 2025, researchers at METR published a study that deserves far more attention than it has received in psychology and coaching circles. In a carefully designed randomized controlled trial, sixteen experienced software developers, people who had contributed for years to large open-source repositories averaging over a million lines of code, completed real tasks from their own projects, with each task randomly assigned to be done either with or without AI tools. The results were striking. When developers were allowed to use AI, they took 19% longer to complete their work than when they worked without it (Becker, Rush, Barnes, and Rein, 2025).

AI made them slower.

But here is where it becomes psychologically fascinating rather than merely technically interesting. Before the study, developers predicted that AI would speed them up by 24%. After the study, having just lived through the slowdown, they still believed AI had accelerated them by 20%. The measured reality and the felt experience pointed in opposite directions, and in the developers' own judgment the feeling won.

We need to sit with that for a moment.

Why Our Brains Cannot Accurately Judge AI Performance

This is not a story about AI being bad. It is a story about a predictable and well-documented failure in human self-assessment under conditions of novelty and complexity.

The phenomenon has a name in the psychological literature: illusory superiority, or more precisely in this context, a form of overconfidence that emerges when we lack accurate feedback loops (Kruger and Dunning, 1999). When we cannot clearly measure our own performance, we substitute feeling for data. And AI tools are exceptionally good at generating the feeling of productivity, which is not the same thing as the fact of it.

Consider what AI interaction actually delivers moment to moment. It responds instantly. It produces confident-sounding text. It removes the friction of starting. It mimics the sensation of intellectual partnership. These are not trivial things. They are psychologically potent. The problem is that they reward the process rather than the outcome, and our brains are poorly equipped to notice the difference in real time.

This maps onto what self-determination theory tells us about intrinsic motivation. Ryan and Deci (2000) established that humans have deep needs for competence, autonomy, and relatedness. AI tools create a compelling simulation of all three. You feel competent because the output looks sophisticated. You feel autonomous because you are directing the interaction. You feel a kind of relatedness because the system responds to you as if it understands you. The emotional signature of AI-assisted work can feel like mastery even when the measurable output tells a different story.

The Competence Paradox in Professional Practice

In my own research on what I call the Competence Paradox, I have documented a consistent pattern across clinical and coaching psychology: over 50% of practitioners now use AI tools in some form, yet fewer than 11% can explain in any meaningful way how those systems actually function (Van Zyl, 2026). We are adopting at the speed of comfort and integrating at the speed of comprehension, and those two speeds are not the same.

The METR study surfaces the same paradox in a different domain. The developers were not naive. They were experienced. They had used AI tools for dozens to hundreds of hours before the study. And still, they overestimated their AI-assisted performance by roughly 40 percentage points when measured against what actually happened.
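For readers who want to check the arithmetic behind that gap, here is a minimal sketch using only the three figures quoted above. It rests on an assumption of mine: that the forecast, the after-the-fact belief, and the measured result can all be placed on one "percent speedup" scale, with the measured 19% slowdown written as -19. The variable and function names are hypothetical and chosen purely for illustration; this is not the METR team's analysis code.

```python
# Figures as quoted in this article from Becker et al. (2025).
# Assumption of this sketch: everything sits on one "percent speedup" scale,
# so the measured 19% slowdown is written as -19.
FORECAST_SPEEDUP = 24    # what developers predicted before the study
PERCEIVED_SPEEDUP = 20   # what developers believed after the study
MEASURED_SPEEDUP = -19   # what the trial actually measured


def perception_gap(believed: float, measured: float) -> float:
    """Return how far a belief sits above the measurement, in percentage points."""
    return believed - measured


forecast_gap = perception_gap(FORECAST_SPEEDUP, MEASURED_SPEEDUP)
hindsight_gap = perception_gap(PERCEIVED_SPEEDUP, MEASURED_SPEEDUP)

print(f"Gap between forecast and measurement: {forecast_gap} points")
print(f"Gap between hindsight belief and measurement: {hindsight_gap} points")
```

Under that assumption, the hindsight gap comes to 39 points, which is the "roughly 40" referred to above, and the gap for the original forecast is slightly larger at 43 points.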

This is what I mean when I argue that technological humility is not a soft value. It is a cognitive prerequisite for competent AI use.

When Removing Friction Removes the Work That Matters

There is a deeper psychological layer here that the study does not address directly but that its data strongly implies. When we use AI, we are not simply adding a tool to our workflow. We are entering into a fundamentally different relationship with our own thinking. We outsource the friction, and friction, it turns out, is where much of the cognitive work actually happens.

Research on desirable difficulties in learning, pioneered by Robert Bjork (1994), shows that the conditions that feel most productive in the moment are often least conducive to actual skill development and quality output. The struggle is not a sign that something is wrong. It is frequently where depth is built. When AI removes the struggle, it may also be removing the depth, and we feel better precisely because we have avoided the hard part.

For psychologists and coaches, this has a direct clinical translation. If we are using AI to draft formulations, generate intervention plans, or summarize session notes, and we feel more efficient in doing so, we owe it to our clients and to our professional integrity to ask an honest question: is the feeling of efficiency tracking actual quality, or is it tracking the comfort of reduced effort?

The METR developers could not accurately answer that question about themselves even after the experiment was over. What makes us think we can?

The Question We Are Not Asking Often Enough

The question I want to leave with you is not whether to use AI. That question is effectively settled. The question is whether we are building the psychological infrastructure to use it honestly, which means developing the capacity to separate the feeling of productivity from the evidence of it, to resist the emotional rewards of AI-assisted fluency, and to maintain the kind of critical self-assessment that competent practice has always required.

Technological humility begins with a simple and uncomfortable acknowledgment: the confidence you feel when working with AI is a data point about your psychology, not your performance.

Those are different things.

And right now, most of us are confusing them.

References

Becker, J., Rush, N., Barnes, E., and Rein, D. (2025). Measuring the impact of early-2025 AI on experienced open-source developer productivity. METR. https://metr.org/blog/2025-07-10-early-2025-ai-experienced-os-dev-study/

Van Zyl, L.E. (2026). The competency paradox: When psychologists over-estimate their understanding of artificial intelligence. AI & Society, 1-15. https://doi.org/10.1007/s00146-025-02814-9