You are sitting across from someone in a development centre. You have known them for three hours. Three hours of structured exercises, a panel interview, maybe a psychometric battery.

And now you are about to write a report that shapes the next five years of their career.

You know, somewhere in your gut, that three hours is not enough. The version of this person you saw today, under artificial pressure, performing for an audience, is not who they are at work on a Tuesday.

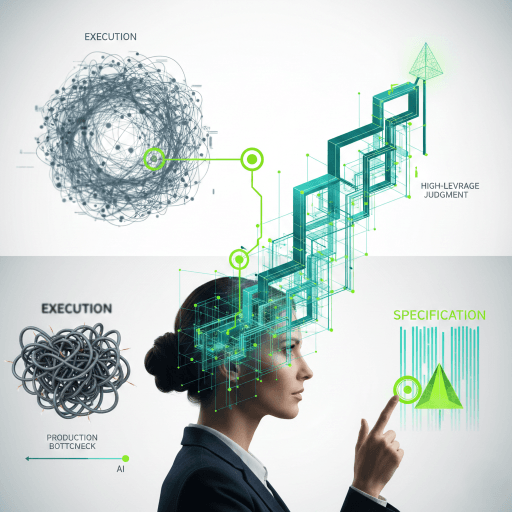

Every assessment ever built shares the same architectural flaw. It meets a person for the first time and tries to understand them in a single sitting. We have been writing reviews of people based on one page of their story. The science is excellent. The architecture is wrong.

We measure people in episodes when they exist in streams.

The Introvert Problem

Two people apply for the same business development role.

Person A is highly extroverted. Builds relationships through energy and charisma. Commands the room. Closes deals over dinner. Exceeds targets consistently.

Person B is deeply introverted. Builds relationships through research, written proposals, and long-term trust. Listens more than they talk. Closes deals through persistent value delivery. Also exceeds targets consistently.

Both are excellent. They activate entirely different capabilities to reach the same outcome.

Now the assessment centre. The capability profile says: high extraversion, persuasive communication, strong interpersonal presence. Because that is what the literature says a good salesperson looks like.

Person A sails through. Person B is automatically disqualified.

Not because they cannot do the job. Because the instrument cannot see how they do it.

A fixed assessment built for one activation pattern penalises the other. An agentic assessment generates a different instrument for each of them, because it understands how each person actually approaches the problem. That is what personalised assessment means. Not a friendly colour scheme. A different instrument.

The Statistical Ghost

We built a science of talent for people who do not exist. The average person is a statistical ghost.

This is not a metaphor. Population norms describe nobody in particular. When your instrument is calibrated for a person who does not exist, it predicts nobody accurately. Every norm-referenced score describes the average. The average does not walk into your assessment centre.

We assess unique individuals with identical instruments. Then we wonder why 30% of hires fail in the first year. This is not a measurement problem. It is an architecture problem. And it requires an architectural solution.

What if the assessment already had a living model of the person's capabilities, built from eighteen months of continuous signal, and used that model to decide what to measure, how to measure it, and what to generate?

That is what we are building. Let me show you the architecture.

Four Structural Failures

Current talent assessment suffers from four architectural limitations that no amount of better content can fix.

- Static snapshots. Assessments capture a moment in time, then treat it as permanent. People change. Contexts shift. The assessment stays frozen.

- Population averages. Every score is normed against a group. The individual trajectory is invisible. What works in general can be precisely wrong for the person in front of you.

- One-size-fits-all. Every candidate gets the same questions in the same order. The assessment cannot adapt. It measures compliance with a fixed format, not actual capability.

- They just don't work. Many assessments carry significant cultural, gender, and socio-economic bias. They show poor face validity and limited concurrent and predictive validity, and in some cases they produce results that are simply inaccurate.

These are not bugs. They are architectural limitations.

The Four Eras of Assessment

Assessment has evolved through four architectural eras.

- Era one (1950s to 2010s): standardised. Fixed items, fixed order, population norms. Everyone gets the same test.

- Era two (2010s to 2020s): computer adaptive. Item difficulty adjusts based on performance. Better, but still the same item bank. Still population parameters.

- Era three (2020 to 2025): AI-enhanced. AI does the scoring, conducts interviews, generates reports. Faster, but the architecture underneath is still static content measured against group averages.

- Era four (2026 onward): agentic assessment built on digital twins. The system builds a living model of the individual, generates assessment content on demand, and adapts the entire methodology per person. No two assessments are alike.

The real discontinuity sits between eras three and four. Eras one through three improved efficiency. Era four changes the architecture. That is a different kind of shift entirely.

And here is the adoption gap that should concern everyone: 99% of hiring leaders now use AI somewhere in their talent process (HireVue, 2025). About 5% can tell you how the model generates its recommendations, what data feeds it, or what happens when it is wrong. That is 94 percentage points of pure adoption without architecture. The gap is where the risk lives.

We have the adoption. We do not have the architecture.

ATLAS: The Adaptive Twin-Led Assessment System

ATLAS stands for Adaptive Twin-Led Assessment System. It is a five-layer architecture we are building at Psynalytics that fundamentally rethinks how we assess people.

At its foundation, seven continuous data streams feed a digital twin: a seven-layer computational model of the individual that integrates psychological traits, cognitive patterns, behavioural rhythms, performance data, and contextual factors into a living, evolving representation. That twin runs 20,000 simulations before the person answers a single question, mapping where competence holds and where it breaks.

An eight-step agentic assessment chain then generates an entirely unique assessment for that specific person, from scratch, calibrated to their industry, their role, their growth stage, and the way they actually work. No item bank. No pre-written scenarios. Every question, every simulation, every scenario is created in real time by specialised AI agents that have become domain experts in the content being assessed.

Once the evidence is collected, a swarm of up to a thousand evaluator agents, each seeded with a different theoretical prior and domain lens, scores it independently. The agents then enter structured adversarial debate, challenging one another's conclusions across multiple rounds.

The output is not a score. It is a structured disagreement map that shows where the swarm converged, where it split, and why the divergence zones contain the most diagnostic information in the entire assessment. A governance layer built on the AI-IARA framework preserves six irreducible human capacities throughout, ensuring that no algorithmic recommendation overrides human judgment without explicit, informed consent.

And the whole system feeds back into itself: the twin informs the agents, the agents inform the swarm, the swarm updates the twin. A living system, not a pipeline.

Five layers, one continuous feedback loop, and a governance framework that keeps human sovereignty over every decision.

Layer 1: Multi-Source Live Stream Data

Assessment has always relied on episodic data. A survey once a year. An interview once in a career. A test on one specific day. That gives you one page of a person's story.

Live stream data is continuous signal from the systems people already use. Calendar density and meeting patterns. Email cadence and sentiment. Collaboration patterns across platforms. Task completion rhythms over time. Seven data streams flowing into an integration layer, processing continuously.

The critical insight: most organisations are already sitting on 80% of the data they need. It is already being collected. It is just not being integrated.
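As a minimal sketch of what "integration" could mean in practice, assume each source system already exposes a time-ordered list of events. The `Signal` type, the stream names, and the values below are hypothetical illustrations, not part of ATLAS. The sketch only shows separate streams being merged into one chronological timeline with Python's standard `heapq.merge`.

```python
import heapq
from dataclasses import dataclass
from datetime import datetime


@dataclass(frozen=True)
class Signal:
    """One observation from one source system (hypothetical shape)."""
    timestamp: datetime
    stream: str   # e.g. "calendar", "email", "tasks" (illustrative names)
    value: float  # a pre-computed metric, e.g. meeting hours that day


def integrate(*streams):
    """Merge already time-sorted streams into a single chronological timeline."""
    return list(heapq.merge(*streams, key=lambda s: s.timestamp))


# Made-up sample data for two of the streams.
calendar = [
    Signal(datetime(2025, 1, 6), "calendar", 5.5),
    Signal(datetime(2025, 1, 8), "calendar", 3.0),
]
tasks = [
    Signal(datetime(2025, 1, 7), "tasks", 4.0),
]

timeline = integrate(calendar, tasks)
for signal in timeline:
    print(signal.timestamp.date(), signal.stream, signal.value)
```

The merge is the easy part. The harder design questions, such as consent, retention, and which metrics are computed from each stream, sit outside this sketch.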

Layer 2: The Digital Twin Engine

Most people hear "digital twin" and think dashboard or avatar. A twin is neither.

A talent digital twin is a dynamic, computational model of an individual that integrates psychological, behavioural, contextual, and performance data into a continuously evolving representation. It is a living hypothesis about a person. An evolving model that learns. A simulation environment for potential.

The twin has seven layers: functional (role history, qualifications), psychological (traits, values, motivational drivers), cognitive (problem-solving, reasoning), behavioural (communication patterns, work rhythms), physiological (opt-in wearable data like sleep and HRV), performance (KPIs, delivery, ratings), and ecological (team dynamics, organisational stressors, context).

Seven layers. Thousands of live data points. Continuous.
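To make the seven layers concrete, here is one way the twin's container could be sketched. Only the layer names come from the list above. The class name, fields, and the opt-in check are assumptions for illustration, not the actual ATLAS data model.

```python
from dataclasses import dataclass, field
from typing import Any, Dict

# Layer names follow the seven layers listed above; everything else
# (class name, fields, method) is a hypothetical illustration.
LAYERS = (
    "functional", "psychological", "cognitive", "behavioural",
    "physiological", "performance", "ecological",
)


@dataclass
class TalentTwin:
    person_id: str
    layers: Dict[str, Dict[str, Any]] = field(
        default_factory=lambda: {name: {} for name in LAYERS}
    )
    physiological_opt_in: bool = False  # wearable data is opt-in

    def update(self, layer: str, key: str, value: Any) -> None:
        """Record a new observation in one layer of the twin."""
        if layer not in self.layers:
            raise ValueError(f"unknown layer: {layer}")
        if layer == "physiological" and not self.physiological_opt_in:
            raise PermissionError("physiological data requires opt-in")
        self.layers[layer][key] = value


twin = TalentTwin(person_id="person-b")
twin.update("behavioural", "preferred_channel", "written")
```

Refusing physiological updates unless the person has opted in is one way to enforce the opt-in condition in the data structure itself instead of leaving it to policy.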

With a real twin, you can run 20,000 simulations before the person ever opens the assessment. You already know where competence holds and where it breaks. You already know what to probe and what to generate.

Your digital twin should never be more certain about you than you are about yourself. This is a safety principle, not just a design choice.
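One way to read this principle as an engineering constraint is to cap the confidence the twin may report at the person's own stated confidence. The sketch assumes both values sit on a 0 to 1 scale. The function name and the scale are assumptions for illustration only.

```python
def capped_confidence(twin_confidence: float, self_confidence: float) -> float:
    """Return the confidence the twin may report, never above the person's own."""
    for value in (twin_confidence, self_confidence):
        if not 0.0 <= value <= 1.0:
            raise ValueError("confidence values must lie between 0 and 1")
    return min(twin_confidence, self_confidence)


print(capped_confidence(0.92, 0.70))  # 0.7: the twin defers to the person
print(capped_confidence(0.55, 0.70))  # 0.55: the twin is already less certain
```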

Layer 3: The Agentic Assessment Engine

Before I explain the assessment chain, four terms need to be precise. They get used interchangeably in vendor meetings. They are not the same thing.

- An LLM is a prediction engine. Prompt in, response out. No memory, no goals, no autonomy.

- An agent is an LLM with tools, memory, and a goal. It can search, decide, and act.

- An agentic system is multiple specialised agents orchestrated together. Each becomes a domain expert. They design methodology, generate content, and adapt in real time.

- Swarm intelligence is different entirely. Thousands of agents spawned on demand. If one does not know something, it creates another to go learn it and come back. Intelligence emerges from the collective.

When a vendor says "we use AI agents," ask them which of these four levels they mean.

The ATLAS assessment chain has eight steps. The system becomes a world-leading expert in the content domain. It becomes an expert in measurement design for this specific construct and this specific person. It defines evaluation criteria. It generates assessment content on demand, from scratch, nothing from a bank, nothing pre-written. It implements the assessment, adapting in real time. It spawns a swarm of evaluators. It synthesises a diagnostic with visible uncertainty. And it generates personalised development content structured for how this specific person learns.
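The eight steps can be written down as an ordered chain. This is a rough sketch: the step names are my shorthand for the steps described above, and the handlers are placeholders that only record which step ran. It shows the ordering and nothing about how any step is actually implemented.

```python
from enum import IntEnum


class ChainStep(IntEnum):
    """The eight steps in the order the text lists them (names are hypothetical)."""
    DOMAIN_EXPERTISE = 1
    MEASUREMENT_DESIGN = 2
    EVALUATION_CRITERIA = 3
    CONTENT_GENERATION = 4
    ADAPTIVE_DELIVERY = 5
    SWARM_EVALUATION = 6
    DIAGNOSTIC_SYNTHESIS = 7
    DEVELOPMENT_CONTENT = 8


def run_chain(handlers, context):
    """Run one handler per step in order, each receiving the evolving context."""
    for step in ChainStep:
        context = handlers[step](step, context)
    return context


# Placeholder handlers that only record which step ran.
handlers = {step: (lambda s, ctx: ctx + [s.name]) for step in ChainStep}
trace = run_chain(handlers, [])
print(trace)
```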

The assessment agent does not draw from a pre-built item bank. It synthesises domain expertise in real time. For a fintech scale-up assessing strategic leadership, the agent researches the fintech regulatory environment, analyses the company's growth stage, identifies the specific leadership tensions at that scale, and generates scenario-based assessments calibrated to that exact context.

No two assessments are identical. And the reason is not randomisation. The reason is that the system has read the twin, understood how this specific person approaches problems, and designed an assessment that tests how they actually work.

That is the difference between adaptive item selection and agentic assessment design. One adjusts difficulty. The other reinvents the instrument.

Layer 4: Swarm Evaluation

Swarm intelligence is not a panel of four or six named agents with titles. It is thousands of agents spawned on demand.

Each one is seeded with a unique evaluation persona, a different theoretical prior, a different weighting of evidence, a different lens on competence. An I/O psychology specialist. A psychometric expert. A cognitive scientist. A hiring manager proxy. A fairness auditor. A behavioural analyst. And critically, a set of devil's advocates whose only job is to challenge every conclusion.

Three phases. Spawn, debate, signal.

The agents evaluate the same evidence independently, then enter structured adversarial debate rounds. An agent that rates someone as strong in strategic reasoning must defend that rating to three other agents with different priors. Consensus that survives adversarial challenge is more defensible than consensus that was never challenged.

Then the system maps convergence and divergence. Where 87% of agents agree, you have a finding you can act on. Where they split 52 to 48, you have the most diagnostic information in the entire assessment.
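The convergence and divergence mapping can be illustrated with a small sketch. It assumes each agent casts one categorical rating per competency and that agreement is measured as the share of agents backing the most common rating. The 0.80 cut-off, the competency names, and the votes are made up for illustration and are not ATLAS parameters.

```python
from collections import Counter


def agreement_share(votes):
    """Fraction of agents backing the most common rating for one competency."""
    counts = Counter(votes)
    return counts.most_common(1)[0][1] / len(votes)


def map_swarm(ratings, converge_at=0.80):
    """Split competencies into convergence and divergence zones.

    `converge_at` is an assumed cut-off, not a published ATLAS parameter.
    """
    converged, diverged = {}, {}
    for competency, votes in ratings.items():
        share = agreement_share(votes)
        (converged if share >= converge_at else diverged)[competency] = share
    return converged, diverged


# Made-up votes from 100 hypothetical agents per competency.
ratings = {
    "strategic_reasoning": ["strong"] * 87 + ["developing"] * 13,
    "stakeholder_influence": ["strong"] * 52 + ["developing"] * 48,
}
converged, diverged = map_swarm(ratings)
print(converged)  # {'strategic_reasoning': 0.87}
print(diverged)   # {'stakeholder_influence': 0.52}
```

In this toy version the divergence zone is just a low agreement share. A fuller version would also keep each side's reasoning, since the point of the divergence zone is why the agents split.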

Every assessment system you have ever used treats disagreement as failure. The swarm treats it as signal.

The divergence zones are not noise. They are the questions the hiring manager should be asking in the interview. The single number is what the vendor sells. The divergence zone is where the value lives.

The output is four things: a capability map with confidence intervals per competency, a disagreement report showing where agents diverged and why, an uncertainty landscape with visible confidence bands (the system tells you what it does not know), and personalised development pathways derived from the divergence zones.

One thousand agents debated. Four rounds of adversarial challenge. Three divergence zones flagged. Zero single scores produced.

Layer 5: Human Governance (AI-IARA)

If an AI system claims to assess human capability, who accepts psychological responsibility when it is wrong?

The AI-IARA framework, published in the Journal of Positive Psychology, identifies six irreducible human capacities essential for wellbeing and agency under algorithmic conditions. Each is operationalised as an engineering requirement, not an abstract ethical principle.

Awareness: the ability to notice when algorithms influence us. Interpretation: the ability to generate our own meaning. Intention: the ability to choose our own values before optimisation chooses them. Action: the ability to translate intentions into behaviour. Relational Agency: the ability to maintain authentic human connection. And Autonomy: the ability to consciously adjust AI reliance.

These six capacities either strengthen or atrophy depending on how AI systems are designed. They are design requirements, not aspirations.

The Feedback Loop

ATLAS is a living system, not a pipeline. The twin informs the agents. The agents inform the swarm. The swarm updates the twin. Every pipeline architecture fails because the output of each stage is frozen before it reaches the next. ATLAS feeds back into the twin. The twin is always the most current version of the person.

The Introvert, Revisited

Remember Person B from the beginning?

Inside the ATLAS architecture, the multi-source data already shows that this person builds trust through written communication and strategic follow-up. The digital twin has modelled their approach for eighteen months.

The agentic assessment does not ask them to role-play a cold call. It generates scenarios that test how they actually work.

The swarm evaluates their effectiveness against actual business development outcomes, not against a generic capability profile designed for the extrovert.

And the simulation discovers something the fixed assessment never could: Person B outperforms Person A in complex, long-cycle B2B sales. The introvert closes larger deals, retains clients longer, and generates more revenue per account.

A fixed assessment would have eliminated them in round one. ATLAS found the signal the old system was designed to miss.

Monday Morning Actions

Three things you can do this week.

Audit one assessment. Pick your highest-volume assessment. Map every point where it treats individuals as interchangeable. Count the assumptions baked into the fixed format. That count is your opportunity cost.

Map your data streams. List every data source already collected about your people. Performance systems, 360 feedback, engagement surveys, learning platforms, communication patterns. Most organisations are sitting on 80% of what they need for a digital twin foundation. The data exists. The integration does not.

Define safety boundaries first. Before building anything, answer: What should this system never be allowed to decide about a person? Who has override authority? What happens when the model is wrong? Where does human judgment remain non-negotiable?
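One way to make those answers binding is to write them down as a policy object before any model exists. This is a minimal sketch. The field names, the routing labels, and the example values are all hypothetical placeholders, not recommendations and not part of ATLAS.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SafetyPolicy:
    """Answers to the boundary questions, recorded before anything is built."""
    never_decide: frozenset   # decisions the system may never make
    override_authority: str   # role that can overrule any output
    when_wrong: str           # agreed process when the model is wrong
    human_only: frozenset     # decisions reserved for human judgment

    def route(self, decision: str) -> str:
        """Say how a proposed decision type must be handled."""
        if decision in self.never_decide:
            return "blocked"
        if decision in self.human_only:
            return "human_decides"
        return "system_recommends_human_confirms"


# Example values are placeholders, not recommendations.
policy = SafetyPolicy(
    never_decide=frozenset({"termination"}),
    override_authority="hiring_manager",
    when_wrong="flag_review_and_correct_record",
    human_only=frozenset({"final_hire"}),
)
print(policy.route("termination"))
print(policy.route("development_suggestion"))
```

Making the policy immutable (`frozen=True`) reflects the idea that these boundaries are set first and are not adjusted by the system they constrain.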

Five Questions for Every Assessment Vendor

Save this list. Ask these in your next vendor meeting.

- Does your system model each individual, or compare against averages?

- Can it generate original content, or select from a fixed bank?

- How does it validate generated content in real time?

- What happens to data after the session? Who owns the model?

- If the system makes a wrong call, who accepts responsibility?

If they cannot answer these five questions, they are selling yesterday's technology in today's packaging.

The Most Dangerous Number

The most dangerous number in assessment is a single number. It compresses a human being into a coordinate on someone else's dimension.

We started with three hours across from someone in a development centre. Three hours to write the next chapter of their life. And a system that could only see one version of who they are.

The architecture I described here gives you a system that already knows the person, that generates an assessment for who they actually are, that preserves the complexity a single number destroys.

AI should expand human agency, not reduce people to coordinates on someone else's dimension.

That is the principle that governs everything we build.