Why this discipline exists

Most responsible-AI work treats psychology as a values discussion. Engineers build the system. Ethicists review the principles. Lawyers paper the contracts. Psychology gets invited to the conversation only after a deployment goes wrong: a hiring tool turns out to discriminate, a wellbeing app turns out to harm, an AI coach turns out to make users worse. By then the cost of fixing the system is an order of magnitude higher than the cost of designing it correctly in the first place.

AI psychology rejects the values framing. It treats people-impact AI as a measurement problem first and a technology problem second. It applies the same psychometric standards that have applied to any people-impact measurement instrument since the 1950s, and it answers four questions before the system goes near a hiring panel, a wellbeing programme, or a clinical workflow. Does the system perceive the right context? Does it interpret the signal correctly? Does it act with proportionate authority? Does it preserve the user's autonomy and social agency?

Read the full definition on the AI psychology hub.

Three concrete contributions

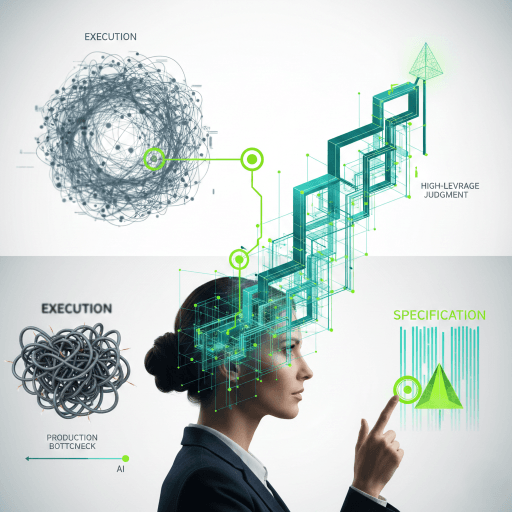

Psychology adds three things to AI design that ethics frameworks alone cannot.

Construct definition before training. Engineers usually start with available data and reverse-engineer a label. Psychology starts with the construct. What does culture fit mean? What does engagement mean? What does wellbeing mean? Until those constructs are defined in language an independent psychometrician can review, every score the system produces downstream is undefined.

Subgroup measurement, not just subgroup outcomes. Adverse impact ratios alone are insufficient. They compare only selection rates, so they can hide real bias when populations differ, for example when the same score predicts outcomes differently in each group. Psychology brings measurement invariance (does the test mean the same thing across groups), differential prediction (does the score over-predict for one group), and floor and ceiling effects to the fairness conversation. These are not optional once the system makes decisions about people.
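To make the gap between subgroup outcomes and subgroup measurement concrete, here is a minimal Python sketch. Everything in it is an assumption made for illustration: the toy scores, the outcome ratings, the cutoff of 65, and the function names are hypothetical, and the pooled regression line is only a simplified stand-in for a full differential prediction analysis. In this toy data the adverse impact ratio comes out at exactly 1.0, yet the same score over-predicts the later outcome for one group.

```python
from statistics import mean

# Hypothetical toy data: assessment scores and a later outcome rating per person.
reference_scores = [50, 60, 70, 80]
reference_outcomes = [5, 6, 7, 8]
focal_scores = [50, 60, 70, 80]
focal_outcomes = [4, 5, 6, 7]
CUTOFF = 65  # assumed selection threshold


def selection_rate(scores, cutoff):
    """Share of people at or above the cutoff."""
    return sum(s >= cutoff for s in scores) / len(scores)


def adverse_impact_ratio(focal, reference, cutoff):
    """Focal-group selection rate divided by reference-group selection rate."""
    return selection_rate(focal, cutoff) / selection_rate(reference, cutoff)


def fit_line(x, y):
    """Ordinary least squares for one predictor; returns (slope, intercept)."""
    mx, my = mean(x), mean(y)
    sxx = sum((xi - mx) ** 2 for xi in x)
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return slope, my - slope * mx


def mean_prediction_error(scores, outcomes, slope, intercept):
    """Mean of (predicted - observed); positive means over-prediction."""
    return mean(slope * s + intercept - o for s, o in zip(scores, outcomes))


# Outcome view: identical selection rates, so the ratio shows no problem.
air = adverse_impact_ratio(focal_scores, reference_scores, CUTOFF)

# Measurement view: fit one common line, then check the errors per group.
slope, intercept = fit_line(
    reference_scores + focal_scores, reference_outcomes + focal_outcomes
)
focal_error = mean_prediction_error(focal_scores, focal_outcomes, slope, intercept)
reference_error = mean_prediction_error(
    reference_scores, reference_outcomes, slope, intercept
)

print(f"adverse impact ratio: {air:.2f}")             # 1.00
print(f"focal prediction error: {focal_error:+.2f}")  # +0.50
print(f"reference prediction error: {reference_error:+.2f}")  # -0.50
```

A real audit would use far larger samples, test slope and intercept differences formally, and examine measurement invariance and floor and ceiling effects separately; the sketch only shows why a clean outcome ratio does not settle the question.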

Contestability as engineering, not policy. A person scored by an AI system has the right to see the result, question it, and appeal it. Without a procedural appeal path, the system is not deployable in any high-stakes setting in a jurisdiction with meaningful AI regulation, such as the EU AI Act. Contestability is a design layer that lives in the product, not a footnote in the privacy policy.
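One way to picture contestability as a design layer is a decision record that carries its own appeal state. The Python sketch below is an illustration under stated assumptions, not a description of any particular product: the class name, field names, and status values are hypothetical, and a production system would add authentication, deadlines, and persistent audit storage.

```python
from dataclasses import dataclass, field
from enum import Enum


class AppealStatus(Enum):
    NONE = "none"
    OPEN = "open"
    UPHELD = "upheld"
    OVERTURNED = "overturned"


@dataclass
class ScoredDecision:
    """Hypothetical record of one AI-produced score about one person."""
    subject_id: str
    score: float
    explanation: str  # plain-language reason shown to the scored person
    appeal_status: AppealStatus = AppealStatus.NONE
    history: list = field(default_factory=list)  # append-only event trail

    def view(self) -> dict:
        """See the result: what the scored person is shown."""
        return {
            "score": self.score,
            "explanation": self.explanation,
            "appeal_status": self.appeal_status.value,
        }

    def open_appeal(self, reason: str) -> None:
        """Question the result: only one appeal per decision in this sketch."""
        if self.appeal_status is not AppealStatus.NONE:
            raise ValueError("an appeal already exists for this decision")
        self.appeal_status = AppealStatus.OPEN
        self.history.append(("appeal_opened", reason))

    def resolve_appeal(self, reviewer: str, overturned: bool, note: str) -> None:
        """Appeal outcome: a named human reviewer closes the appeal."""
        if self.appeal_status is not AppealStatus.OPEN:
            raise ValueError("no open appeal to resolve")
        self.appeal_status = (
            AppealStatus.OVERTURNED if overturned else AppealStatus.UPHELD
        )
        self.history.append(("appeal_resolved", reviewer, note))


# Usage with made-up values.
decision = ScoredDecision("candidate-001", 0.42, "Low match on structured interview items")
decision.open_appeal("Interview audio was cut off halfway")
decision.resolve_appeal("reviewer-7", overturned=True, note="Recording incomplete; rescore")
print(decision.view()["appeal_status"])  # overturned
```

The point of the design is that seeing, questioning, and appealing are methods on the record itself, so a decision cannot exist in the product without an appeal path attached to it.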

Why this matters now

Three pressures are converging. The EU AI Act treats AI in employment, education, and access to essential services as high-risk; high-risk systems require documented validity, fairness, and post-deployment monitoring. The US disparate-impact framework already applies to any selection tool, and class-action discovery is rapidly catching up to AI products. Reputational exposure compounds: a single visible failure damages the brand for years.

Without AI psychology in the design loop, the audit-ready evidence each of these pressures asks for does not exist when it is asked for. Producing it after deployment costs at least an order of magnitude more than producing it during design.

What to read next

If you build or buy AI assessments, start at the buyer-facing hub: AI-driven assessments. It walks through what an audit produces using a worked example of an AI hiring tool.

If you want to score your own system, run the AI-IARA self-assessment. It produces a risk dashboard in about 15 minutes.

If you want the formal methodology, the framework is published in the 2026 Journal of Positive Psychology paper linked from the AI psychology hub.