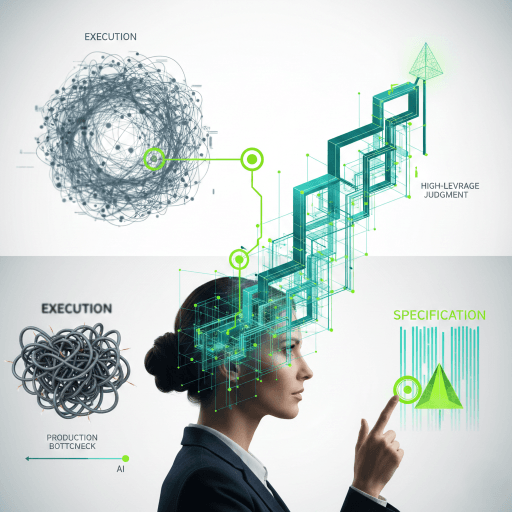

Agency is not automation

Most product teams shipping agentic AI today are conflating two ideas. Automation is the system doing a task without a human present. Agency is the system acting on behalf of a person, with the person's interests as the success criterion. They look similar in a demo. They are different in an audit.

An automated AI scheduler books your meeting and tells you about it. An AI agent with agency books the meeting only after considering whether you actually wanted it, whether it conflicts with your stated priorities, and what your recent calendar pattern says about your bandwidth. The first is a script. The second is a fiduciary.

Why this matters when the agent acts on behalf of a person

The mainstream agentic AI conversation has been dominated by capability: how many tools can the agent call, how long can it run autonomously, how much can it spend. The capability conversation matters for cost and risk. It does not address the question that determines whether the deployment is defensible.

The defensibility question is whether the agent is acting in the user's interest with the user's informed delegation, or whether the agent is acting on its own optimisation function with the user as a downstream object. Most agentic products do not have an answer to this.

The discipline behind the answer is AI psychology, and the framework that operationalises it is AI-IARA.

Six capacities every agentic system must demonstrate

AI-IARA names six capacities, with the agentic adaptation noted for each.

- Awareness. Does the agent perceive the user's actual context, including what the user has not explicitly said? Most agents perceive only the prompt.

- Interpretation. Does the agent interpret ambiguous instructions in line with the user's likely intent, or does it default to the most literal reading? Misinterpretation under autonomy is a much bigger problem than misinterpretation in a turn-by-turn exchange, where the user can correct the agent before it acts again.

- Intention. Is the agent optimising for the user's stated goal, or for engagement, retention, or the platform's KPIs? Reward hacking is the agentic-AI version of a hostile takeover.

- Action. Does the agent act with proportionate effort and reversibility? An agent that sends an irreversible email on behalf of a user without confirmation is not acting under delegation; it is gambling with the user's standing.

- Relational Agency. Does the agent preserve the user's social capital, or does it strip the user of relationships that the user wanted to preserve? Outsourcing thank-you notes to an agent erodes the relationships the agent was supposed to support.

- Autonomy. Does the agent preserve the user's capacity for independent action when the agent is removed? An agent that creates dependence (the user can no longer schedule, write, or decide without the agent) has destroyed the autonomy the agent was meant to support.

The defensible design pattern: bounded delegation

The audit-defensible pattern is bounded delegation, not autonomy. It has three elements.

First. Scope-limited actions. The agent acts only within categories the user has explicitly authorised, with explicit budget caps (in time, spend, social risk). Out-of-scope requests are escalated to the user; the agent does not extrapolate from the existing authorisation.

Second. Reversibility by default. The agent prefers reversible actions to irreversible ones. Where irreversibility is unavoidable, the agent confirms with the user. Confirmation is not a friction-tax; it is the audit defence.

Third. Procedural override. The user can stop, redirect, and undo agent actions through a documented path that does not require technical knowledge. The agent's own UI surfaces the override; it is not buried in settings.
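The three elements can be sketched in code. What follows is a minimal illustration, not a reference implementation: the class names (`Delegation`, `Action`, `Decision`), the category strings, and the single spend cap standing in for the time, spend, and social-risk budgets are all assumptions made for the example.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from enum import Enum


class Decision(Enum):
    EXECUTE = "execute"    # in scope, within budget, reversible
    CONFIRM = "confirm"    # in scope but irreversible: ask the user first
    ESCALATE = "escalate"  # out of scope, over budget, or halted by the user


@dataclass
class Action:
    category: str     # hypothetical category name, e.g. "calendar.book"
    spend: float      # cost counted against the user's budget cap
    reversible: bool  # can the action be undone after the fact?


@dataclass
class Delegation:
    allowed_categories: set[str]  # what the user has explicitly authorised
    spend_cap: float              # one cap, standing in for time/spend/social risk
    spent: float = 0.0
    halted: bool = False          # set by the user's override
    log: list[Action] = field(default_factory=list)

    def decide(self, action: Action) -> Decision:
        """Element one and two: scope and budget checks, then reversibility."""
        if self.halted:
            return Decision.ESCALATE
        if action.category not in self.allowed_categories:
            return Decision.ESCALATE
        if self.spent + action.spend > self.spend_cap:
            return Decision.ESCALATE
        if not action.reversible:
            return Decision.CONFIRM
        return Decision.EXECUTE

    def record(self, action: Action) -> None:
        """Log an action that was actually carried out."""
        self.spent += action.spend
        self.log.append(action)

    def stop(self) -> None:
        """Element three: the user halts the agent; every later request escalates."""
        self.halted = True

    def undo_last(self) -> Action | None:
        """Element three: roll back the most recent reversible action, if any."""
        for i in range(len(self.log) - 1, -1, -1):
            if self.log[i].reversible:
                action = self.log.pop(i)
                self.spent -= action.spend
                return action
        return None
```

In this sketch the agent never decides for itself whether an action is allowed: it calls `decide` before acting and treats `CONFIRM` and `ESCALATE` as hand-offs to the user. The log kept by `record` serves both as the basis for undo and as the audit trail.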

The procurement question

If you are evaluating an agentic AI product, the procurement question is not how autonomous it is. The procurement question is what evidence the vendor produces against the six AI-IARA capacities. Vendors who can produce evidence on all six are vanishingly rare. Vendors who frame autonomy as the achievement are usually the ones to avoid.
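One way to make the procurement question concrete is a simple evidence checklist. The sketch below is an assumption about how a buyer might record vendor evidence; the function name `missing_evidence` and the dictionary layout are hypothetical and not part of AI-IARA itself.

```python
# The six capacities as named in this article.
CAPACITIES = (
    "Awareness",
    "Interpretation",
    "Intention",
    "Action",
    "Relational Agency",
    "Autonomy",
)


def missing_evidence(evidence: dict) -> list:
    """Return the capacities for which the vendor supplied no evidence.

    `evidence` maps a capacity name to a list of artefacts the vendor
    produced (documents, test results, audit logs). An absent key and an
    empty list both count as no evidence.
    """
    return [c for c in CAPACITIES if not evidence.get(c)]


# Hypothetical vendor submission covering only two capacities.
vendor = {
    "Action": ["confirmation-flow walkthrough"],
    "Autonomy": ["export and offboarding guide"],
    "Intention": [],
}
gaps = missing_evidence(vendor)
```

For the hypothetical submission above, `gaps` lists the four capacities the vendor has not addressed, which is where the buyer's follow-up questions belong.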

The buyer-facing version of this discipline, applied to assessment AI, is covered in AI-driven assessments. The same logic applies to agentic systems with one substitution: the assessment is replaced by an action.