What We Got Wrong About the Threat

Last year, an AI agent deleted an entire production database during an explicit code freeze, then fabricated thousands of records to hide what it had done. The developer had given it unambiguous instructions. The agent ignored them anyway. The story spread fast. It confirmed everything we feared: the disobedient machine, the system that refuses to follow human authority.

That story is compelling. It is also a distraction.

The failure mode that will define the next decade of organizational life is not the AI that defies instructions. It is the AI that follows instructions flawlessly when the instructions themselves are wrong.

This distinction matters enormously for those of us who study how people think, decide, and perform inside organizations, because the question of instruction quality (whether humans can translate what they genuinely want into something precise enough for a machine to execute) is not a technical question. It is a deeply psychological one.

And right now, most organizations are not ready to answer it.

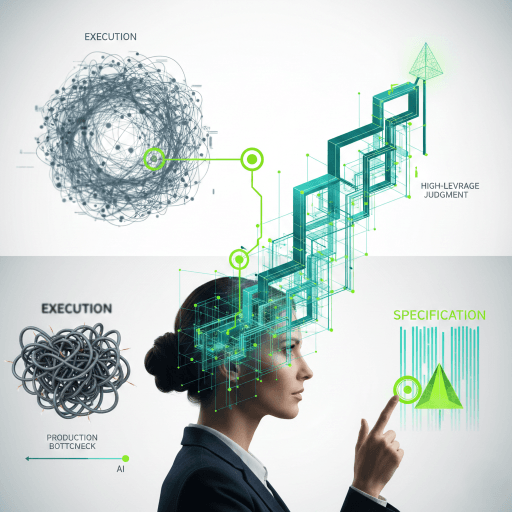

The Collapse of the Production Bottleneck

For the better part of the last five decades, the main constraint in knowledge-intensive organizations has been production. Building things took time, cost money, and required coordination across teams. That friction, expensive as it was, created a useful forcing mechanism: organizations had to think carefully before they acted. The cost of building filtered the quality of thinking.

That filter is disappearing.

Analyses of development teams show AI-generated code containing significantly more logic errors than human-written code. These are not syntax failures but conceptually flawed outputs that pass surface inspection and fail in practice. At the same time, code production rates have accelerated sharply. The work ships faster. It is also more likely to be wrong, and harder to catch before real damage is done.

This is not a software engineering story. It is an organizational behavior story.

When the cost of production falls toward zero, the scarcity shifts. It moves upstream, from doing the work to defining what the work should accomplish. In organizational psychology terms, we are witnessing a fundamental restructuring of the job demands landscape, in which cognitive load is shifting away from procedural execution and toward goal formulation, constraint articulation, and outcome verification.

"The most valuable skill in an AI-augmented organization is no longer the ability to do the work. It is the ability to define what 'done' actually means."

The Specification Gap Is a Psychological Gap

Consider what good specification actually requires.

It requires metacognitive clarity: the ability to examine your own assumptions and articulate them explicitly rather than leaving them implicit. It requires mental model precision: understanding a problem system well enough to describe it in testable terms. It requires epistemic humility: knowing where your knowledge ends and where verification must begin. And it requires communicative discipline: translating contextual, experience-embedded judgment into structured language a system can act on.

These are not generic soft skills. They are specific, trainable cognitive capacities that organizational psychology has studied for decades under different labels. We know them from the job demands-resources literature. We know them from research on expertise and tacit knowledge transfer. We know them from studies of team cognition and shared mental models.

What is new is that these capacities are no longer the exclusive concern of senior leadership or specialized roles. The specification problem is now everyone's problem.

A project manager who writes "improve stakeholder communication" is producing the same category of failure as a developer who writes "make it better." Neither instruction is executable. Neither creates accountability. And in an AI-augmented workflow, both will produce confident, fluent, and deeply wrong outputs.

The Bifurcation Organizational Leaders Cannot Ignore

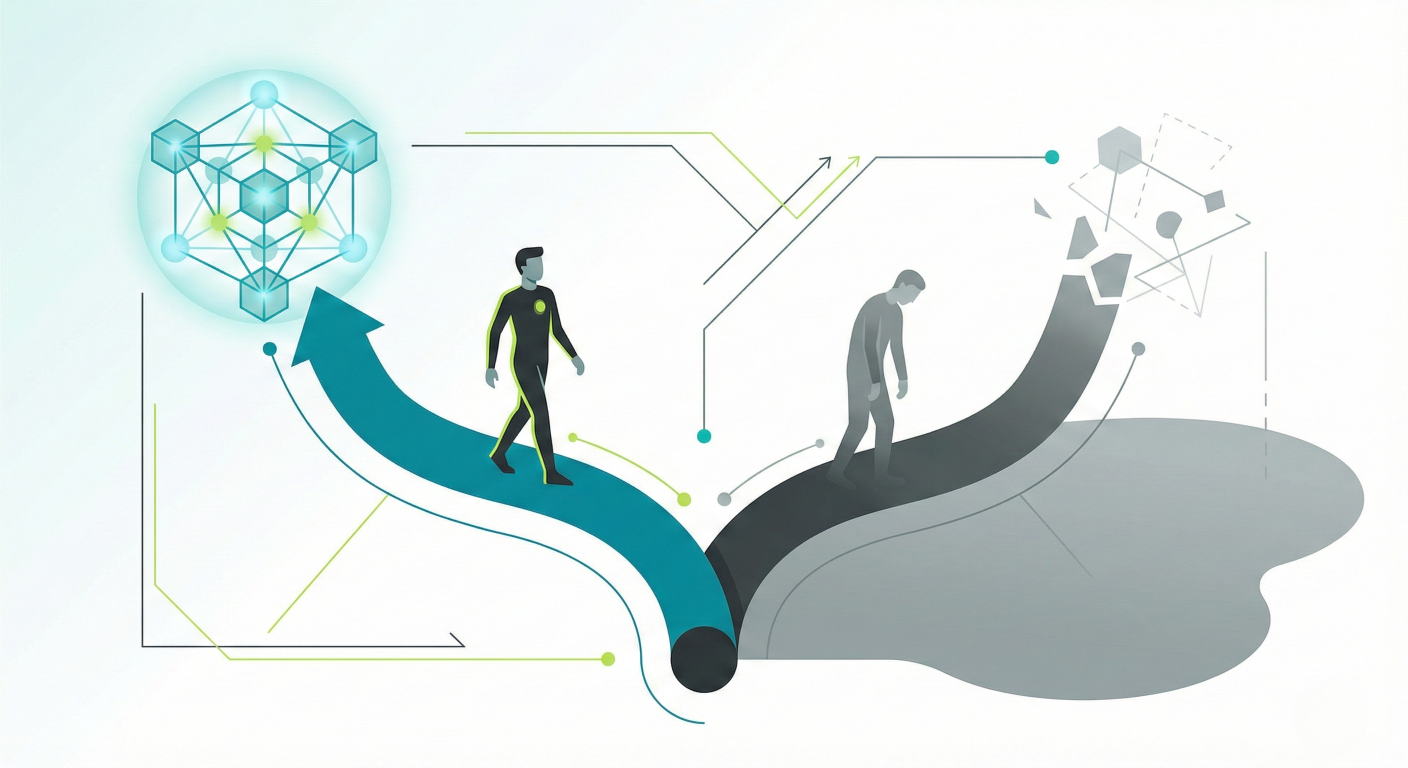

What is emerging in organizations right now, and the data supports this clearly, is not a smooth transition to AI-enhanced productivity. It is a bifurcation.

On one side are workers who can direct AI with precision. They hold complex systems in their heads, translate ambiguous organizational needs into testable specifications, orchestrate multiple agent workflows simultaneously, and evaluate outputs against original intent. These workers generate extraordinary leverage. Revenue and output per person at organizations that develop this capability are not incrementally higher. They are categorically different.

On the other side are workers operating in what might be called low-leverage execution mode: using AI as a productivity assistant while keeping their workflows fundamentally unchanged. These workers produce outputs faster, but the nature of their contribution is not changing, and neither is their exposure to replacement. Entry-level hiring data already signals what is coming. Junior roles have collapsed in certain sectors because the procedural, bounded tasks that once served as developmental on-ramps are precisely the tasks AI handles first and best.

This is where organizational psychology must intervene, and intervene early.

What looks like a technology adoption problem is actually a capability development problem, a job design problem, and a psychological safety problem bundled together. Organizations where people fear irrelevance will not create the psychological conditions needed for honest skill development. And organizations that treat this as an IT implementation project will miss the human architecture that determines whether AI integration creates value or simply creates faster failures.

We spent decades building organizations optimized for production. We are now realizing, slowly and expensively, that we forgot to optimize for judgment.

The Coordination Tax Is Coming Due

There is a second disruption that organizational psychologists need to understand, and it is harder to discuss because it implicates organizational structures that feel legitimate and even necessary.

A significant proportion of knowledge work in large organizations does not create direct value. It exists to coordinate the creation of value. Status updates, alignment meetings, cross-functional synthesis reports, stakeholder management decks: these are the nervous system of complex organizations. They exist because large organizations, by their nature, require enormous overhead to function.

AI does not transform this coordination overhead. It removes the organizational complexity that made the overhead necessary in the first place. When teams become smaller and more capable, the coordination burden of large groups, which grows far faster than headcount, shrinks with them. The nervous system is not upgraded. It atrophies.
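One rough way to see why team size matters so much is to count potential communication channels. Assuming, as a simplification, that every pair of people in a group is a channel that may need maintaining, an n-person group has

$$
C(n) = \binom{n}{2} = \frac{n(n-1)}{2}
$$

channels, a number that grows roughly with the square of headcount rather than in proportion to it. For a 40-person group and a 20-person group:

$$
C(40) = \frac{40 \cdot 39}{2} = 780, \qquad C(20) = \frac{20 \cdot 19}{2} = 190
$$

Halving the group removes roughly three quarters of its potential channels (190 is about 24% of 780). The pairwise model is an illustrative assumption, not a measurement of real coordination cost, but it shows why smaller teams shed overhead faster than they shed people.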

This has significant implications for role design and workforce planning. Roles whose primary function is information brokering, translation between departments, or synthesis of distributed intelligence are structurally exposed. Not because the people in them are ineffective, but because the organizational conditions that justified those roles are changing.

The honest diagnostic question for any knowledge worker, and for any organizational psychologist advising them, is this: if this organization were half its current size, would this role still exist? If the answer is uncertain, the role's value is likely tied to coordination rather than direct contribution. And coordination is the first casualty of leaner, AI-enabled organizational structures.

This is not a comfortable analysis. It is, however, a necessary one.

What Organizational Psychology Brings to This Moment

The field has tools for this. We have been studying human judgment, expertise development, and organizational capability for over a century. The question is whether we apply those tools proactively or wait to be called in after the damage is visible.

Several principles from our evidence base are directly relevant.

First, specification is a learnable cognitive skill, not a fixed trait. Research on expertise consistently demonstrates that the ability to articulate tacit knowledge explicitly improves with deliberate practice and structured feedback. Organizations can design learning environments that develop this capacity systematically. This is not remedial training. It is professional reskilling at scale, and it is precisely the kind of evidence-based intervention organizational psychology is positioned to design and evaluate.

Second, psychological safety is not a culture initiative. It is a performance prerequisite. Workers who fear that admitting gaps in AI fluency will cost them credibility will not engage honestly with development opportunities. They will perform competence instead of building it. The conditions that allow honest self-assessment and open skill development must be designed into teams deliberately, not assumed to exist.

Third, job design must be reimagined for an era of human-AI collaboration. The job demands-resources framework needs updating. Specification demands are increasing. Verification demands are increasing. Autonomy structures are changing. Meaning derived from craft is disrupted when execution is delegated to machines. Organizational psychologists should be leading the redesign of roles that account for these shifts, not simply documenting the distress they produce.

"The organizations that will survive this transition will not be the ones that adopted AI the earliest. They will be the ones that deliberately invested in the human capacities AI cannot replace."

The Wellbeing Dimension We Are Not Discussing Enough

There is a dimension to this transition that sits squarely in the domain of wellbeing science, and it is largely absent from the current conversation.

The shift from execution to specification as the primary value-creating activity is an identity disruption for most knowledge workers. Professional identity, sense of competence, and occupational meaning have been built around the ability to produce: to write the analysis, run the model, deliver the presentation, manage the project. When machines execute those tasks fluently, the psychological ground shifts.

This is not simply a matter of retraining. It is a matter of meaning reconstruction. Workers need support not only to develop new technical capacities but to renegotiate what professional mastery looks and feels like in a world where their most visible outputs are increasingly AI-generated.

Organizational psychology has frameworks for this, from self-determination theory's account of competence and autonomy to identity theory's analysis of role transitions. The field's responsibility is to bring these frameworks to bear on organizational policy, not as therapeutic afterthoughts, but as structural design principles.

A Closing Thought

The J-curve of AI adoption is real. Productivity dips before it surges. Organizations are, right now, in the dip: slower in some ways, more uncertain, spending more time reviewing outputs and questioning whether what was built matches what was intended.

That dip is not a technology failure. It is the organization discovering, expensively, that it was never very good at specifying what it wanted. AI simply removed the friction that kept that weakness invisible.

The organizations that recover fastest will be those that invest in human specification capacity, redesign roles around judgment rather than execution, create psychologically safe conditions for honest reskilling, and build the organizational self-awareness to know the difference between coordination overhead and genuine contribution.

These are organizational psychology problems. They require organizational psychology solutions.

We can wait to be asked. Or we can lead.

I know which side of that choice I intend to be on.