AI Assurance and AI Governance

AI assurance is independent, evidence-based evaluation of whether an AI system is safe, lawful, reliable, and fit for its stated purpose in its real deployment context. AI governance is the operating system around the AI that makes those claims controllable over time through clear accountabilities, documented decisions, risk controls, and continuous monitoring.


The discipline behind this work is AI-Driven Assessments. The methodology is the AI-IARA framework.

About This Service

Many AI products ship with performance numbers but weak evidence about validity, bias, misuse risk, or downstream harm. Assurance closes the evidence gap. Governance keeps the system inside its intended boundaries after launch. The outcome is fourfold: decision-grade clarity on what the system can claim; a documented risk position tied to concrete controls and owners; practical remediation actions sequenced by severity; and a monitoring design that ensures safety and compliance do not depend on good intentions alone.


The AGILE Assurance Model

Six components we evaluate to produce a decision-grade assurance package.

Asserted claims and intended use

We audit the system’s stated purpose, decision impacts, and prohibited uses so evaluation targets the real risk.

Governance and accountability

We map decision rights, escalation paths, documentation duties, and change control so responsibility is unambiguous.

Integrity of data and measurement

We test whether inputs and labels are defensible for their use, including construct alignment where human attributes are measured or inferred.

Lifecycle risk controls

We assess how risks are identified, measured, mitigated, and re-checked across build, deployment, and updates.

Legibility and contestability

We verify that outputs are explainable at the level required by users and regulators, and that people can challenge or correct outcomes.

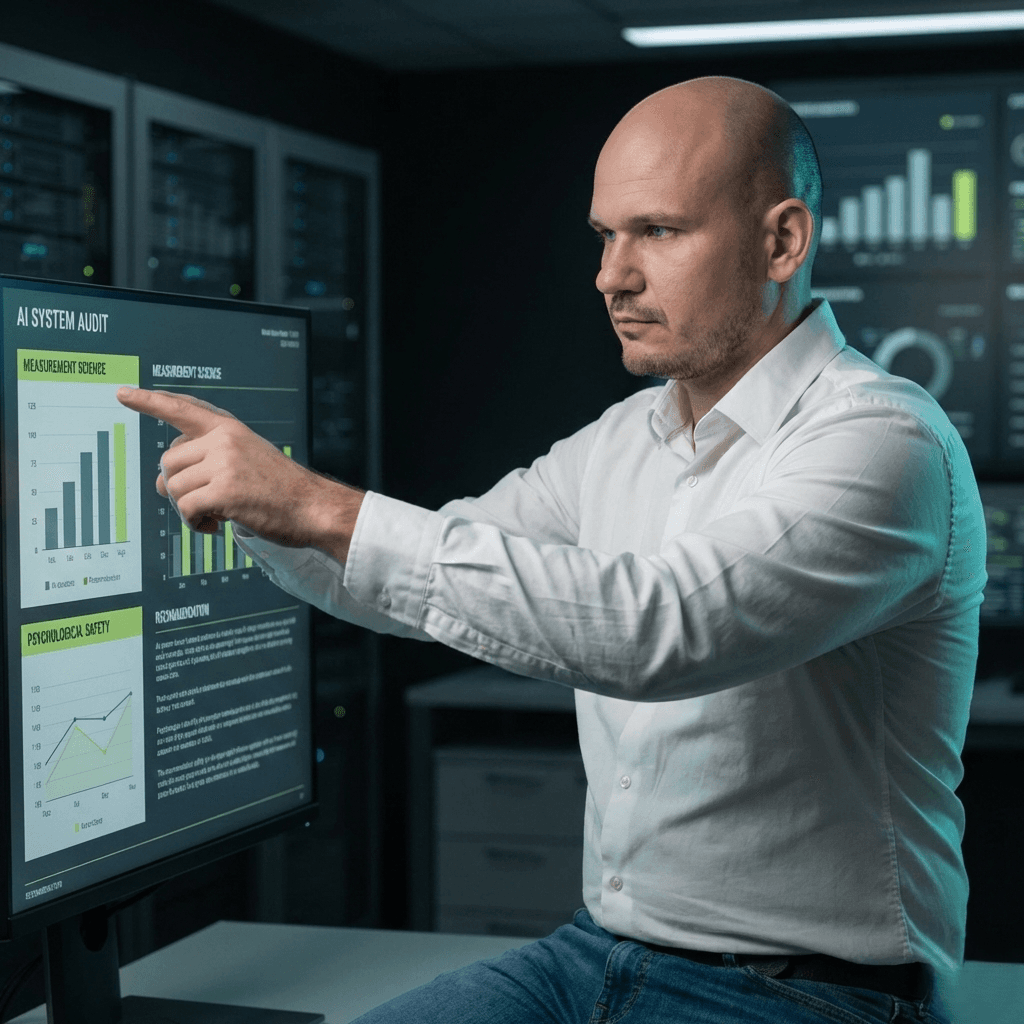

Evidence and monitoring

We define the minimum evidence standard for release, then specify drift, bias, and incident monitoring with trigger thresholds.
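As a rough illustration of what drift monitoring with trigger thresholds can look like in practice, the sketch below computes a Population Stability Index (PSI) between a baseline sample and a live sample of one numeric feature, then maps the score to an action. PSI is one common drift measure chosen here for illustration, not necessarily the metric used in any given engagement. The function names, the bin count, and the 0.1 and 0.25 thresholds are assumptions (the thresholds are widely quoted rules of thumb); real thresholds would be set per system during scoping.

```python
import math
from typing import Sequence


def population_stability_index(
    expected: Sequence[float], actual: Sequence[float], bins: int = 10
) -> float:
    """PSI between a baseline sample and a live sample of one numeric feature."""
    if not expected or not actual:
        raise ValueError("both samples must be non-empty")
    lo, hi = min(expected), max(expected)
    if hi == lo:
        raise ValueError("baseline sample has no spread to bin")
    width = (hi - lo) / bins  # equal-width bins over the baseline range

    def proportions(values: Sequence[float]) -> list:
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            idx = min(max(idx, 0), bins - 1)  # clamp out-of-range values to edge bins
            counts[idx] += 1
        eps = 1e-6  # floor so empty bins do not produce log(0)
        return [max(c / len(values), eps) for c in counts]

    p = proportions(expected)
    q = proportions(actual)
    return sum((qi - pi) * math.log(qi / pi) for pi, qi in zip(p, q))


def drift_status(psi: float, warn: float = 0.1, act: float = 0.25) -> str:
    """Map a PSI score to an action. Thresholds are illustrative assumptions."""
    if psi >= act:
        return "escalate"
    if psi >= warn:
        return "review"
    return "ok"


if __name__ == "__main__":
    baseline = [i / 100 for i in range(100)]
    live = [0.9 + i / 1000 for i in range(100)]  # live data concentrated at the top of the range
    print(drift_status(population_stability_index(baseline, live)))
```

The same pattern (a metric, a warning threshold, an action threshold, and a named owner for each action) carries over to bias and incident monitoring, with the metric swapped for one suited to the risk being tracked.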

What You Receive

A decision-grade assurance package with clear evidence, risk scores, and actionable remediation.

Our Approach

A structured, step-by-step methodology tailored to every engagement.

Scope

Confirm system claims, deployment context, affected populations, and decision criticality. Define evidence thresholds and the assurance boundary.

Map the System

Test and Evidence

Design Governance

Deliver Package

Who This Is For

This service is designed for organisations and teams navigating the intersection of AI, people, and accountability.

Executive Leaders

Leaders deploying AI in hiring, performance, wellbeing, education, or customer decisions who need decision-grade clarity.

Product and Engineering Teams

Teams shipping AI features into regulated or high-trust contexts who need independent validation.

Risk and Compliance Teams

Risk, compliance, and internal audit professionals needing defensible AI oversight and documentation.

AI Vendors

Vendors needing third-party assurance before enterprise procurement or regulatory review.

Frameworks & Standards

Every engagement is anchored to recognised standards and frameworks for accountability and rigour.

Engagement model: A typical engagement runs 4 to 8 weeks, depending on system complexity and data access. Work can be structured as a one-off audit or as an ongoing assurance function with quarterly re-assurance.

Ready to Get Started?

Let’s discuss how this service can support your needs.