Why Should You Care?

Because AI already shapes real decisions about people's careers—and without proper oversight, it can encode bias and cause harm. In a world racing toward automation, ethical AI isn't just a technical issue; it's a moral and strategic one.

Introduction

AI has become common terminology in the corporate world today. It's not something new, but it's still quite scary: it's a big black box that no one understands but everyone wants to use. In most organizations I consult for, I see AI being used to screen CVs, evaluate employee performance, allocate workloads and, in some cases, even manage employee wellbeing. But as AI becomes more powerful and more deeply ingrained in our working lives, the ethical and legal risks grow with it.

The problem, however, is that there are no clear and consistent legal frameworks, policies or guidelines to regulate AI at work. The EU was the first major political institution to draft and pass a comprehensive AI law, the EU AI Act. Most other countries, however, are doing patchwork regulation. Nowhere is this more apparent than in the US, and anyone doing business in the US, or for US companies, should take note of the implications.

In 2025, companies in the US are navigating a fragmented, fast-evolving landscape where AI regulation is inconsistent, high-stakes and, in some places, still very much undefined. The federal government under President Trump has signaled a rollback of federal regulatory oversight, prioritizing innovation over regulation and restriction. Yet this vacuum hasn't set the corporate world free. In fact, it has made doing business in the US a lot more difficult!

A Patchwork of Compliance

With the Biden-era executive order on "Safe, Secure, and Trustworthy" AI revoked a few weeks ago, and a new order, "Removing Barriers to American Leadership in Artificial Intelligence," now driving federal policy, it seems the shift is towards deregulation, and towards chaos. But that doesn't mean companies in the US can relax. Nor does it mean those who do business in the US can turn a blind eye to it.

In fact, I see quite the opposite happening. Individual states have stepped in to fill the void left by the federal government, creating a patchwork of state-level AI laws, each with its own interpretation of fairness, risk, and responsibility, and often at odds with those of other states.

In 2024 alone, lawmakers introduced almost 700 AI-related bills across 45 states. And over 30 states have launched their own independent task forces to study the social, financial and corporate impact of AI.

Taken together, the message is clear: regulation is coming, just not in one neat package. If you work in, or for companies in, different US states, you will have to tread a fine line to make sure you don't break any laws.

The New Frontlines of AI Accountability

Let's look at what's happening in Colorado. The Colorado Artificial Intelligence Act, which is set to take effect in early 2026, regulates the use of AI in what it calls "consequential decisions": in the workplace, decisions that affect hiring, promotions, or disciplinary action, for example. Under this new law, employers must take "reasonable care" to prevent algorithmic discrimination, conduct annual risk assessments, and notify employees when AI is involved in decision-making. But what does "reasonable care" mean if you are merely a user of these models and not involved in their development and training?

Illinois is also leading the charge, in a more granular way. Under its new law (HB 3773), which takes effect in January 2026, companies must inform employees when AI is used in employment decisions and are banned from using geographic data, such as zip codes, as a proxy for protected characteristics like race. In New York City, Local Law 144, enforced since July 2023, requires employers using automated hiring tools to commission an independent bias audit every year and publish the results.

These aren't fringe laws though. They reflect a growing consensus that if AI is influencing people's careers, lives, or paychecks, it must be transparent, accountable, and fair.

Understanding "High-Risk" AI

But not all AI tools, platforms and models are created equal. Some are innocuous, like tools that help you schedule appointments or chatbots that answer customer service questions. Others, like hiring algorithms or performance assessment systems, hold real power over people's lives. These are labeled High-Risk AI Systems (HRAIS). They can affect whether someone gets a job, a raise, or a second chance, and when used carelessly, they can perpetuate bias or institutionalize discrimination.

It's therefore no surprise that ten other states are considering bills similar to Colorado's and New York City's. So if your organization uses AI to make employment decisions or to manage people, you're no longer just a tech adopter. You're a regulated entity.

Developing an AI Compliance Framework

Let's be blunt: if your company doesn't already have an AI compliance framework in place, it's behind, and you are probably already exposed to litigation!

A recent Deloitte report found that over half of organizations globally don't have a formal AI use policy or strategy in place. That's not just a policy gap; it's a legal liability and a reputational risk waiting to happen.

So it's important to put an AI compliance framework in place, one that not only meets your needs but also covers all your bases. What would such a framework look like?

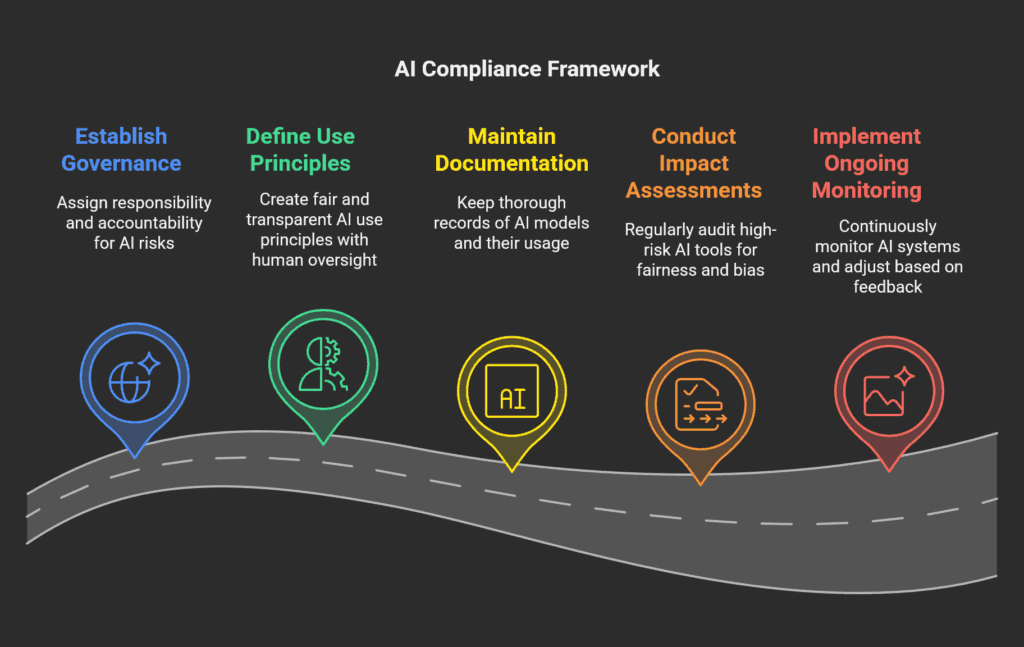

The frameworks we build for our clients tend to focus on five areas:

- Establish Clear Governance. Governance starts with assigning responsibility and accountability. Who owns AI risk in your company? Is it HR? Is it Legal? Is it ICT? Someone needs to be accountable, and formal policies and procedures around this need to be in place.

- Define Responsible Use Principles. Next, you need to define what responsible AI use means in your context. These principles need to promote fairness, transparency and accountability and, above all else, guarantee human oversight throughout the AI value chain. Make sure they are more than corporate values or posters on a wall: they need to be built into workflows across the entire organization.

- Keep Thorough Documentation. Track where your AI models come from, what data they're trained on, and how they're used. Maintain "model cards" for them the way you would maintain employee files.

- Conduct Impact Assessments. Regularly audit your high-risk tools. Stress-test AI before deploying it in real-world environments, and make sure its outcomes are fair and free of bias.

- Ongoing Monitoring. AI is never "set it and forget it." Monitor systems post-deployment. Collect feedback from employees and candidates and adjust your workflows and processes accordingly.
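To make "fair and free of bias" a little more concrete, here is a minimal sketch of the impact-ratio calculation that bias audits, such as those under NYC Local Law 144, are built around: each group's selection rate divided by the selection rate of the most-selected group. The 0.8 threshold is the EEOC's "four-fifths" rule of thumb, used here only as an illustrative flag, not a legal test. The class and function names (`GroupOutcome`, `impact_ratios`, `flag_adverse_impact`) and all the numbers are hypothetical. A real audit goes much further (intersectional categories, sample sizes, an independent auditor).

```python
from dataclasses import dataclass


@dataclass
class GroupOutcome:
    """Hypothetical screening outcome counts for one demographic group."""
    group: str
    applicants: int
    selected: int

    @property
    def selection_rate(self) -> float:
        # Share of this group's applicants that the AI tool advanced
        return self.selected / self.applicants if self.applicants else 0.0


def impact_ratios(outcomes: list[GroupOutcome]) -> dict[str, float]:
    """Each group's selection rate divided by the highest group selection rate."""
    best = max(o.selection_rate for o in outcomes)
    if best == 0:
        raise ValueError("no group had any selections; ratios are undefined")
    return {o.group: o.selection_rate / best for o in outcomes}


def flag_adverse_impact(ratios: dict[str, float], threshold: float = 0.8) -> list[str]:
    """Groups below the four-fifths rule of thumb (a screening heuristic only)."""
    return [group for group, ratio in ratios.items() if ratio < threshold]


if __name__ == "__main__":
    # Illustrative, made-up numbers for a CV-screening tool
    outcomes = [
        GroupOutcome("Group A", applicants=200, selected=60),
        GroupOutcome("Group B", applicants=150, selected=30),
        GroupOutcome("Group C", applicants=100, selected=27),
    ]
    ratios = impact_ratios(outcomes)
    for group, ratio in ratios.items():
        print(f"{group}: impact ratio {ratio:.2f}")
    print("Flag for review:", flag_adverse_impact(ratios))
```

With these made-up numbers, Group B's selection rate (0.20) is about 0.67 of Group A's (0.30), so it is flagged. A flag like this is a prompt for human review and a closer look at the tool, not proof of discrimination, which is why the sketch returns a list to investigate rather than a pass/fail verdict.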

Building an Ethical AI Framework

AI may drive efficiency and help you improve the quality of your work. BUT, and I cannot stress this enough, it doesn't replace human dignity. That's why regulations increasingly emphasize worker rights, and your policies and procedures should reflect that. Here are five principles to consider:

- User Privacy. Most workplace AI tools collect and process personal data in some form. Are you protecting it?

- Informed Consent. Do employees know when AI is used and for what purpose? You have to get their explicit permission before you can process their data with AI.

- Bias Mitigation. Are your AI tools reinforcing internal or external biases? Are they built around outdated patterns and practices? Are you 100% sure they are bias-free?

- Ensure Accountability. Who is responsible, and who will answer questions, when things go wrong? Is this information publicly available?

- Appeal Process. Can employees challenge AI-driven decisions? Is there a process in place to appeal and review decisions made via or with AI?

If your company can't answer these questions clearly, you are not ready to use AI responsibly in your organization, and you are at high risk of landing in a lot of hot water.

Why Ethical AI Is a Competitive Edge

Just like with any corporate branding activity, the way you manage AI will eventually affect how people view your organization. There is a growing belief, especially among younger workers, that how a company uses AI is a clear reflection of its values and how it treats its people.

Companies that adopt ethical, transparent AI frameworks aren't just avoiding lawsuits; they are building a corporate brand that signifies trust, not only with employees but also with the consumers of their products and services. Those who advocate for the fair and transparent use of AI strengthen their corporate culture and show leadership in a world where responsible innovation is the only kind that lasts.